From the Placeholder Investment Thesis Summary, Section 1:

"Information technology evolves in multi-decade cycles of expansion, consolidation and decentralization. Periods of expansion follow the introduction of a new open platform that reduces the production costs of technology as it becomes a shared standard. As production costs fall, new firms come to market leveraging the standard to compete with established incumbents, pushing down prices and margins, and decentralizing existing market powers.

The price drop attracts new users, increasing the overall size of the market and creating new opportunities for mass consumer applications. Entrepreneurial talent moves to serve the new markets where costs are low, competition is scarce, and the upside is high. Often these early entrepreneurs will introduce new kinds of business models, orthogonal to existing ones.

Those who succeed the most and establish successful platforms “on top” of the open standard later tend to consolidate the industry by leveraging their scale (in assets and distribution) to integrate vertically and expand horizontally at the expense of smaller companies. Competing in this new environment suddenly becomes expensive and startups struggle to create value in the shadow of incumbents, compressing venture returns.

Demand then builds for a low cost, open source alternative to the incumbent platforms, and the cycle repeats itself: the new open standard emerges and gets adopted, the market decentralizes as new firms leverage the cost savings to compete with the old on price, value creation shifts upwards (once more), and so on."

Today, we'll look at some of the history that informed this first section of the thesis.

Transistors helped transform Information Technology into an industry. These small, reliable, easy-to-make devices (invented in 1947) collapsed the cost of implementing logic circuitry by replacing the expensive and inefficient vacuum tubes used in early computer systems. It took another 30 years for computers to become somewhat affordable (and then another 30 to put one in everyone’s pocket), but standardization around the transistor, across the entire field of electronics, pushed down costs enough to allow companies like IBM to build a large computer business in the 50’s and 60’s.

IBM effectively created the computer market in 1953 with the IBM 650 and later consolidated it around System/360 throughout the 60’s. The 650 had to be configured for each customer’s individual requirements (expensive); System/360 was a modular platform of interchangeable components with a somewhat general-purpose computer at its core (cheaper). Modularity allowed for greater scale in production and lower costs, which put more computers in the market.

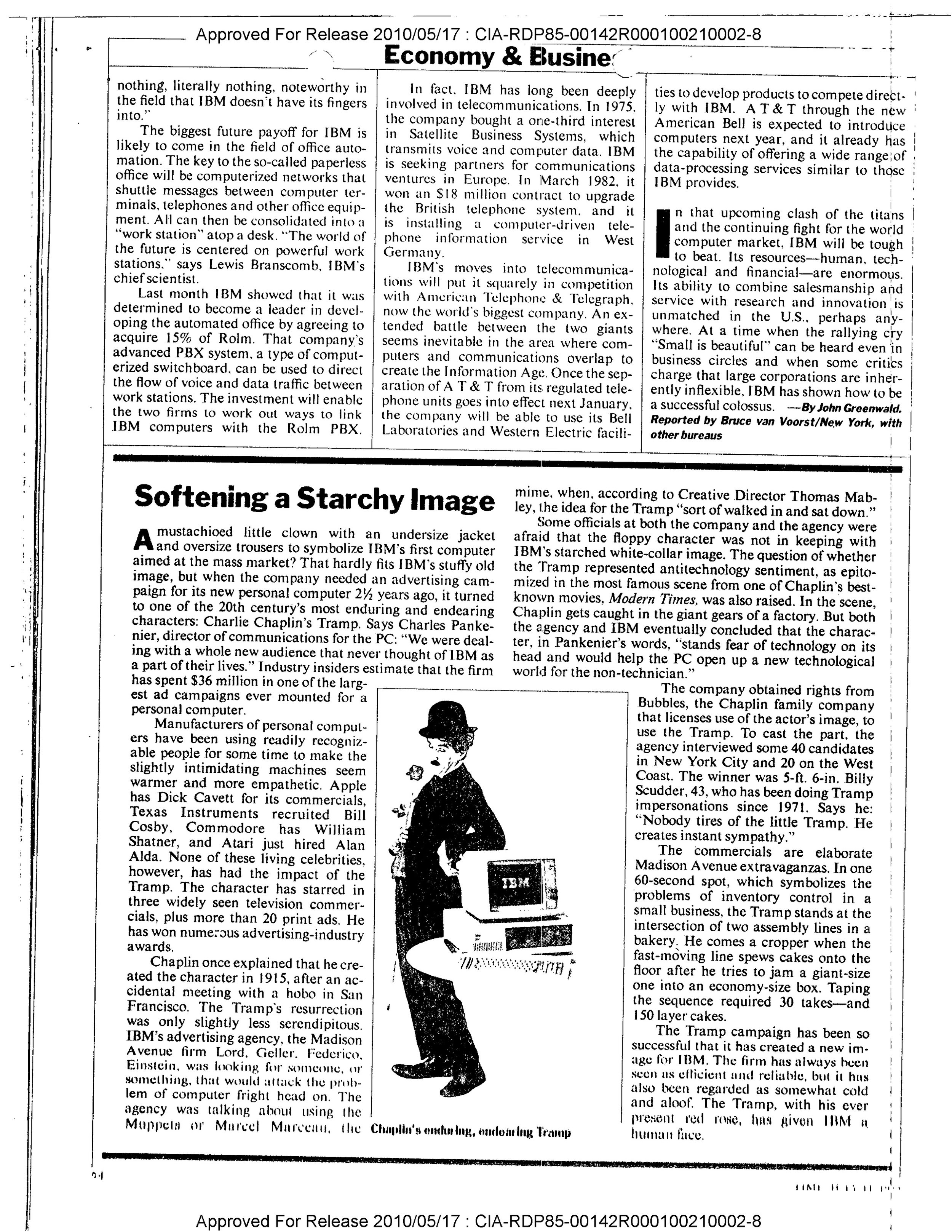

It didn’t take long for System/360 to become the industry standard, giving IBM effective control over the industry. Its leverage was in designing, building, and selling each new System/360 implementation faster than anyone else. Even though the 360 was fairly modular, it was difficult for other mainframe companies to compete against IBM on its own turf. IBM held two-thirds of the computer market by the end of the 60’s; the balance belonged to the seven dwarfs (later reduced to five and known as the BUNCH). IBM had become the biggest computer company in the world.

By 1969 there was a conversation – and a lawsuit – around Big Blue’s growing monopoly. U.S. v. IBM went on for 13 years, but enough market pressure had built up in ‘69 to push IBM into unbundling its business by pricing hardware and software separately, accidentally creating the software services industry.

Source: http://ethw.org/Early_Popular_Computers,_1950_-_1970

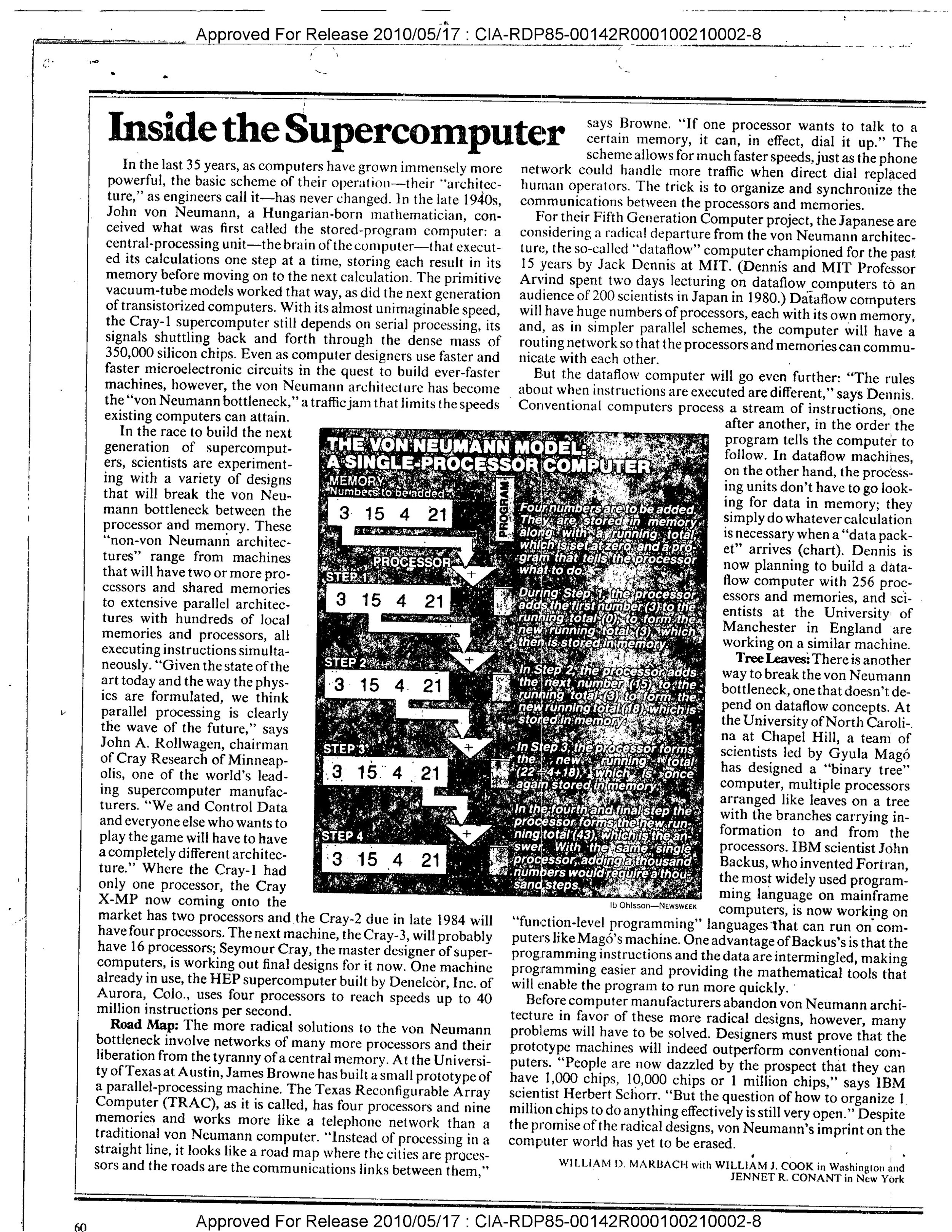

1969 was also the year Intel designed the first microprocessor, the 4004, released in 1971. Before then, CPUs were a collection of discrete components and specialized circuits, meaning new use cases (or markets) required new implementations. Microprocessors consolidated (almost) all processing functions into a single, general-purpose integrated circuit, basically by separating application logic (the program) from processing logic (the computer), as opposed to custom-building both.

As with transistors, standardization around the microprocessor collapsed the cost of building computers by eliminating the need to build bespoke processing systems for each new use case. The ensuing cost decline re-opened the market by inviting competition and bringing down prices, exposing the industry to a larger potential user base. It also de-leveraged IBM, whose business depended on custom mainframe implementations. Microprocessors were easier to manufacture at scale, and as hardware components standardized around them, building a fast, general-purpose computer didn’t take much.

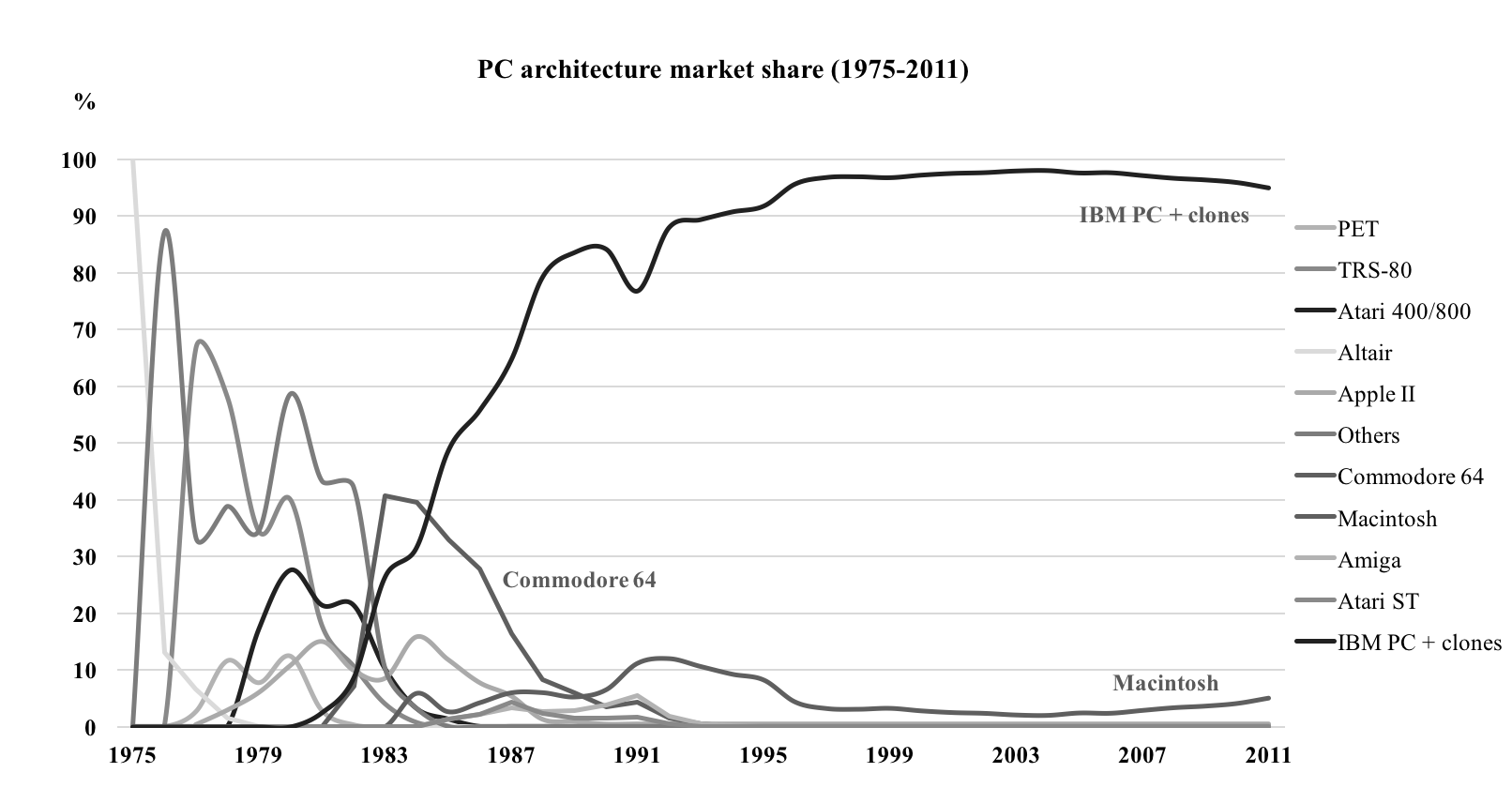

The 70’s saw an explosion of new computer manufacturers offering smaller, cheaper and faster micro and mini-computers. We went from a single computer manufacturer to dozens, all over the world, all assembling interoperable computers using standard components.

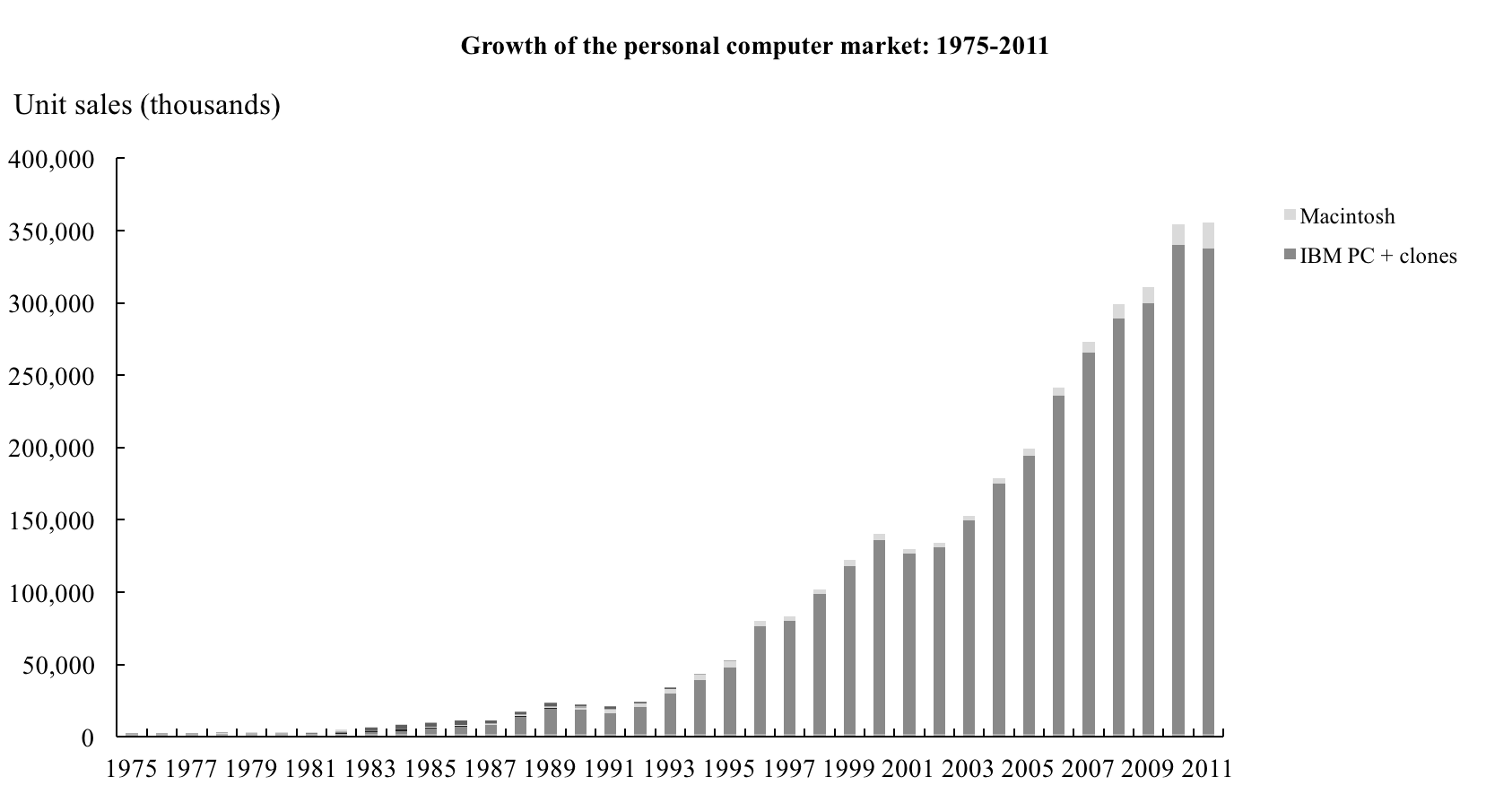

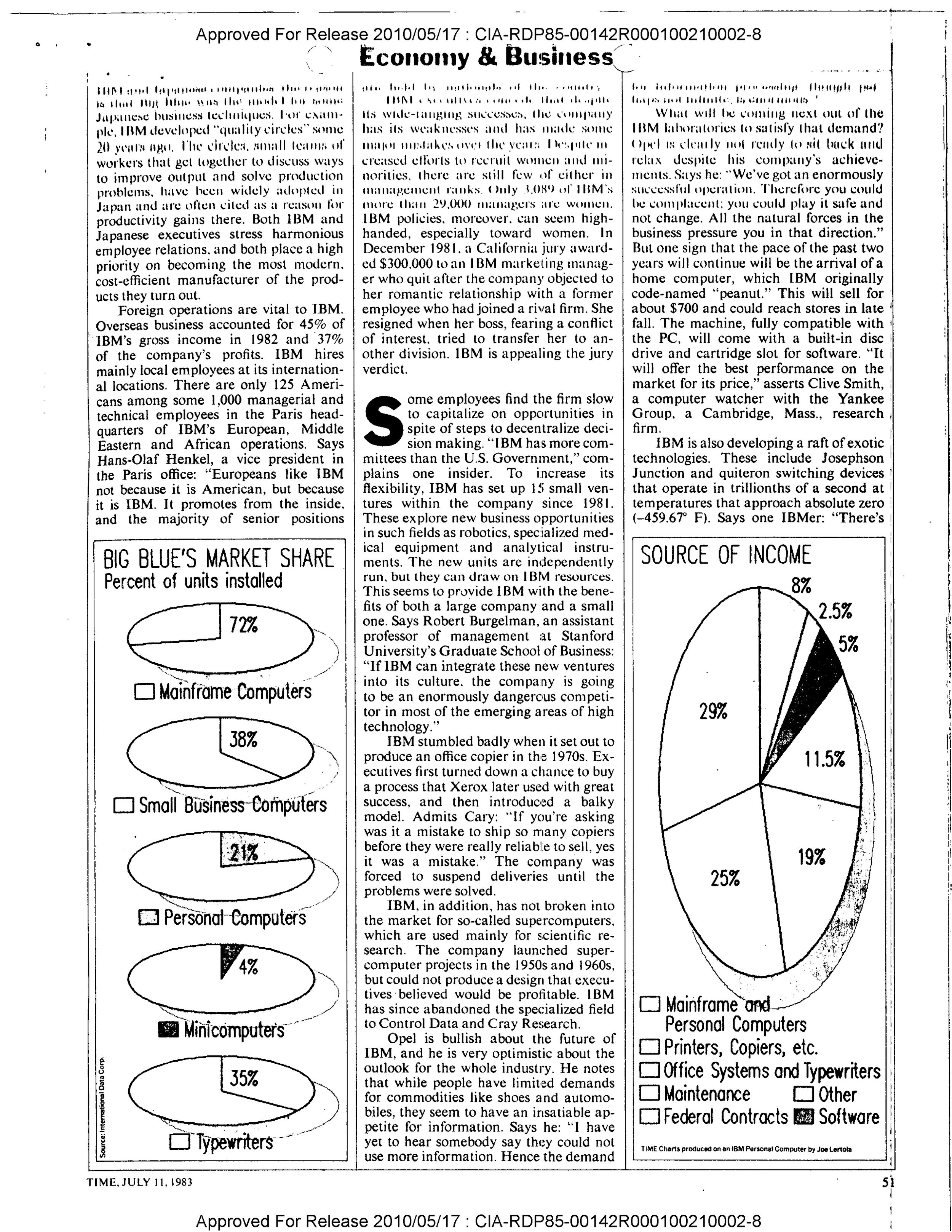

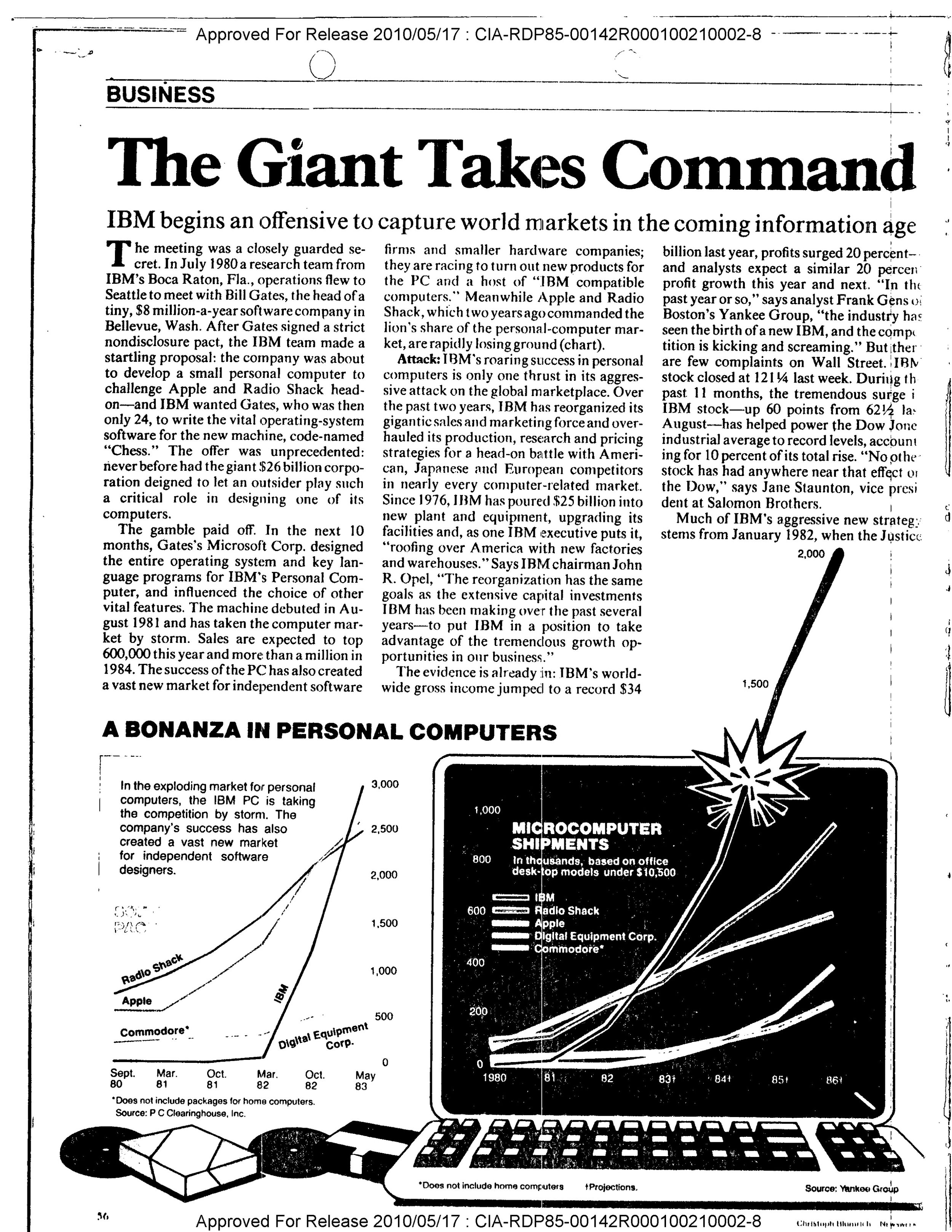

Sales volume and growth (in thousands of units) of early microprocessor-based computers. Source: http://jeremyreimer.com/m-item.lsp?i=137

IBM remained a successful business through the 70’s and 80’s, but its influence waned. After 10 years of denial, in 1981 it launched the IBM PC, its first computer to use an Intel microprocessor. It was a quick and massive success, but everyone had access to the same commodity components, so it was difficult to differentiate on hardware and the PC was quickly cloned. As a single manufacturer among dozens building the same product, IBM found it hard to remain at the forefront of the industry. “Hardware” was no longer an edge, and new value creation moved “up the stack,” to the software layer.

Sales of microprocessor-based computers (in thousands of units) after the release of the IBM PC. Source: http://jeremyreimer.com/m-item.lsp?i=137

As microprocessors unbundled the computer business throughout the 70s, demand for a standard operating system grew. Computers became cheaper and new customers entered the marketplace. Demand for software tools boomed, but it was expensive for small, independent software vendors to support the various OEM operating systems – just as it was expensive for startup computer manufacturers to build their own OS.

Unix got its name at Bell Labs in 1970 and soon after became a key piece of software infrastructure. It was free, open source, and easy to port and modify, so it got pulled into pretty much every new PC project in some shape or form. In fact, Microsoft’s first operating system offering in 1980 was actually a variant of Unix called Xenix.

1980 was also the year Microsoft signed the contract with IBM that made MS-DOS (modeled on CP/M, not Unix) the standard operating system for the upcoming IBM PC. This set the stage for Microsoft to become the dominant operating system vendor of the PC era, as DOS (and later Windows) was almost immediately compatible with the flurry of PC clones that flooded the market. Distribution is everything in the software business, and IBM gave it all to Bill Gates.

Data source: http://jeremyreimer.com/m-item.lsp?i=137

As the PC took off, so did Microsoft. The first version of Windows came out in 1985, and a year later Microsoft went public with $198M in revenues. Revenues almost doubled to $348M in 1987. Software vendors everywhere had no choice but to build for Windows.

The 90’s were a tough time to be in the PC software business. Having already captured 80% of the market for operating systems, Microsoft began consolidating the entire PC software industry in pursuit of higher profits. Leverage in the software business came from a combination of application functionality and retail distribution. Microsoft had an unfair advantage in both. Monopoly ownership of the operating system allowed it to clone and build features others could not by closing third-party access to certain APIs, and a large-scale retail network combined with lock-in contracts with PC manufacturers made it practically impossible for startups to compete. Microsoft could put more CDs, with better software, on more shelves around the world than anybody else.

Charts tell it best. Here’s Microsoft taking over the word processing business from WordPerfect:

Source: http://www.utdallas.edu/~liebowit/book/wordprocessor/word.html

And here’s Microsoft taking over the spreadsheet business from Lotus:

Source: https://web.archive.org/web/20021203193842/http://www.utdallas.edu/~liebowit/book/sheets/sheet.html

By 1991, more than half of Microsoft’s revenues came from the applications business, and by 1997, we’d essentially gone from thousands of PC software companies to one, at least in terms of market share. That year, a decade after its IPO, Microsoft did $11.4 billion in revenues. By 2000 it was $20 billion. As far as the market was concerned, Microsoft was the PC software industry.

The world’s first PC modem came out in 1977, TCP/IP in 1978, and Usenet – the first online social network – in 1980. DNS came out in 1984, and in 1989, the first commercial internet provider, The World, went up and Tim Berners-Lee first proposed a series of application-layer protocols (including HTTP) which led to the creation of the World Wide Web.

In 1983, the US government (through ARPANET) made TCP/IP the standard Internet protocol. Computer networks had been around for a while, but they were proprietary, expensive to build, and incompatible with each other. Everyone used different protocols and networking equipment.

The Internet would not have been possible without these open standards. The sheer cost of building and supporting a single, reliable, computer-based telecommunications network of global scale is impossible to bear for a single entity, not to mention it would’ve created a great deal of centralized risk. Standardization around the open and free internet protocol suite collapsed the cost of building a computer network by eliminating the need to develop networking technology from scratch. More significant was the fact that networks which used TCP/IP were interoperable with each other. Enabling disparate, independently organized local networks to talk to each other distributed the investment necessary to scale the service, and decentralizing the supply side made TCP/IP resilient.

It all really came together in 1991 when Tim Berners-Lee finally announced the WWW to the public, the NSF opened the Internet for commercial use, and Linus Torvalds released the first version of Linux. The web added HTTP, for linking documents across the network, to a family of application protocols built on top of TCP/IP that made it easier for developers to build Internet applications: FTP for transferring files, IMAP/SMTP for email, and so on. HTML came along in 1993, and the Netscape browser in 1994. The dot-com bubble started in 1995, and by 1996 there were some 16 million internet users, or about 0.4% of the world’s population.

Linux was more important than it seemed. We cannot overstate its role in the success of the web. As an open source alternative to Windows, it never really became a direct-to-consumer success. But it did capture developers with its OSS principles, Unix-like malleability and growing community. More Bazaar than Cathedral. It was just as decentralized and kind-of-messy as the web.

The combination of a free, open operating system and a free, open communications network directly challenged Microsoft’s business model. We went from proprietary software running on a proprietary operating system, physically distributed, to free software, built for Linux, distributed via the internet in the form of a website. Without Goliath in the middle, developers competed on more equal and fertile grounds.

Indeed, Microsoft was early to recognize the potential of a global telecommunications network. In 1995, they tried to launch their own. When that failed (MSN was proprietary; the Internet was free), they tried to monopolize the web browser. And when that failed, towards the end of the 90’s, they picked a fight with Linux and open source.

Meanwhile, dot-coms bubbled. Driving the boom was the rate at which new users were coming online. From 1995 to 2000, the number of internet users grew nearly twentyfold, from ~16 million to ~300 million, creating a sense of urgency around the opportunity to profit from the Web. But supply got ahead of itself. Companies and investors overestimated how quickly web businesses would capture market share, raised too much money too early, and spent it all without ever building revenues to make up the difference.

The bubble itself peaked at around $3 trillion before crashing to $1.2 trillion in 2000. “It’s not a correction, it’s a crash,” Fred told CNN at the time. Most companies were 70-90% down and a bit over half of them disappeared. But out of the crash we got the most important companies on the web (Google, Amazon, eBay, Netflix...), and more importantly, the number of internet users worldwide continued to grow at a consistent rate in spite of the market turmoil.

From 2000 to 2010, the number of internet users grew from ~400 million to over 2 billion, reaching about 30% of the world’s population. Server infrastructure standardized, speed and bandwidth increased, programming languages became more accessible, open source development platforms emerged, cloud computing provided scale on the cheap, and so on.

A new period of growth began in 2004 with Google’s IPO, which valued the company at $23 billion, and over time the capital requirements for a typical web business decreased to the point where many of the wild ideas of the 90’s started making sense. Fundamentals came back, investment capital returned, and the decade leading up to 2014 saw remarkable levels of innovation as the number of internet users surpassed 3 billion. Today, in 2018, about half the world’s population is connected to the Internet.

As the market developed, we came to understand the business models of the web. Digital advertising, take-rate marketplaces, SaaS and others brought in more and more revenue as users continued to switch to online services. The new incumbents – Google, Apple, Facebook and Amazon – achieved unprecedented levels of scale. Together, as of 2/18/2018, they’re worth over $2.85 trillion. That is an astonishing number, and half of us aren’t even online yet.

The Centralization of the Web

We’re in the middle of a massive consolidation period driven by Google, Apple, Facebook and Amazon. Like Microsoft and IBM before them, these companies are leveraging their proprietary user networks and massive data sets to expand both vertically and horizontally at an unprecedented pace. The startup scene has been feeling this for a while. Competition has gotten fierce, particularly in the last 5 years. Users are starting to feel it too, as the inherent risks of centralized networks begin to reveal themselves.

Today, as the Times reveals one of the many ways in which Amazon is trying to control the underlying infrastructure of the economy, it’s asking, “just how evil is tech?” Meanwhile, early web natives are struggling with the changes brought about by Silicon Valley's “tax-avoiding, job-killing, soul-sucking machine”.

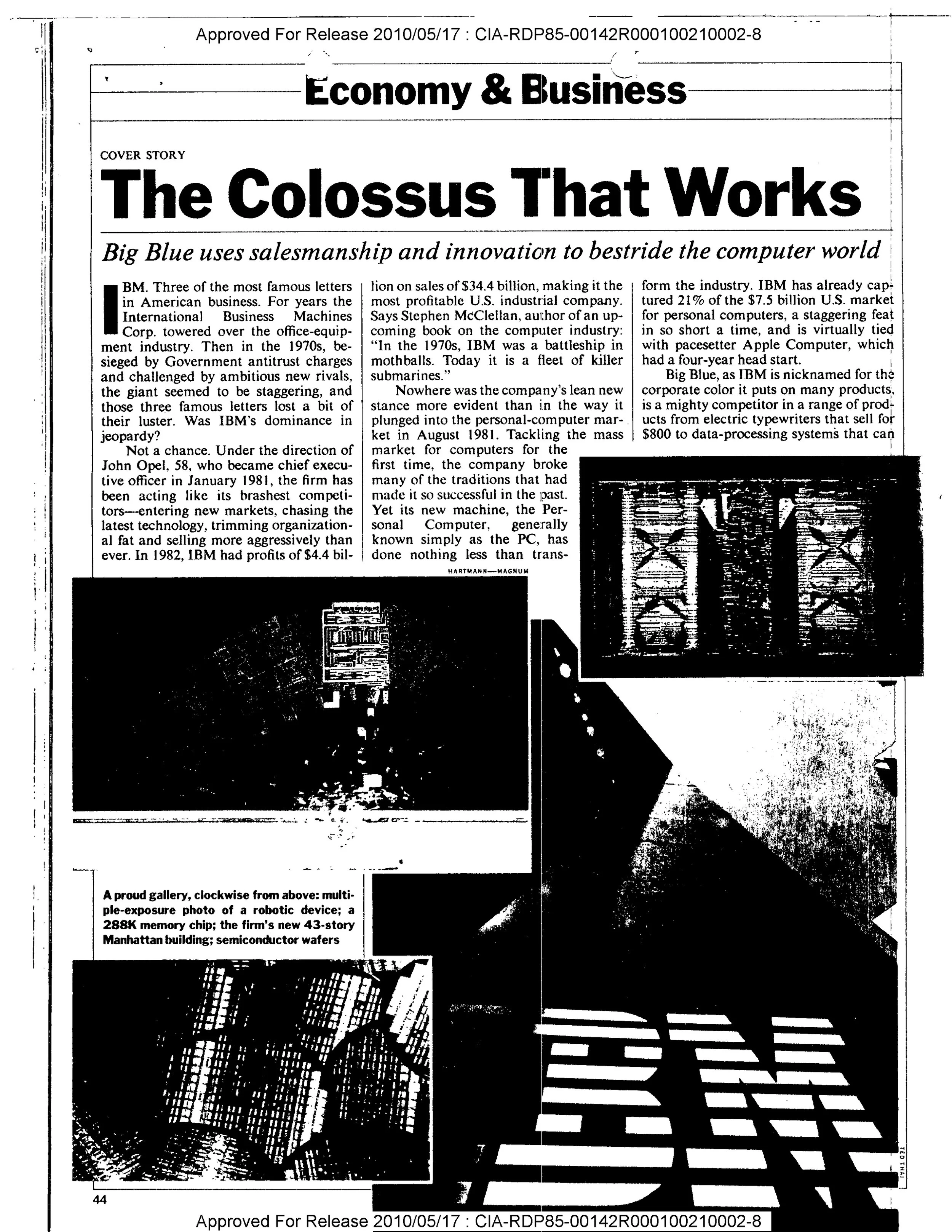

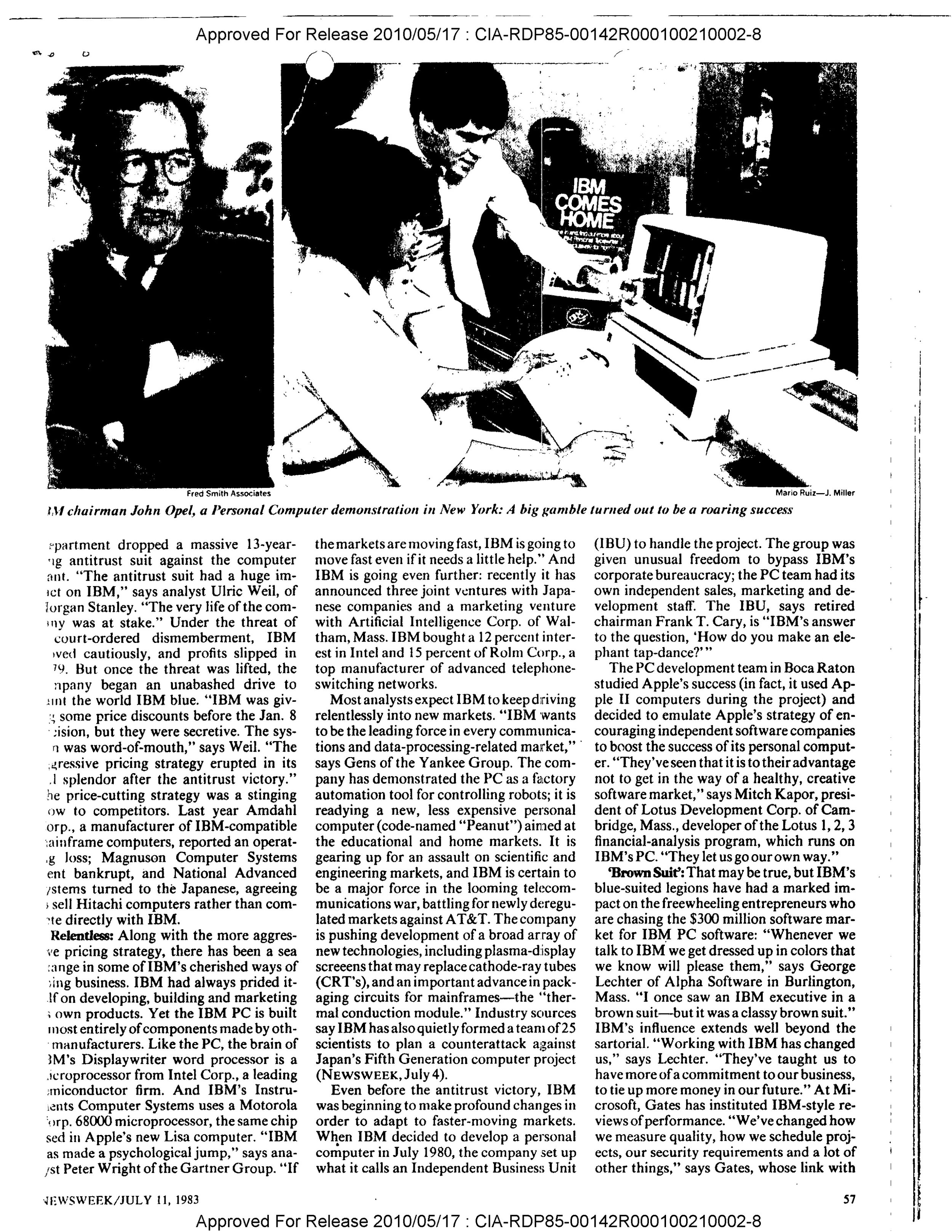

A change in tone in the media is worth paying attention to. Incumbents are easy targets during times of consolidation. There’s the 1983 example above, where the New York Times called IBM a colossus, and countless other articles and features expressing concern for its monopolistic power. Microsoft went through the exact same thing in the 80’s and 90’s. Today’s incumbents are beginning to face these issues.

While we ask ourselves if they’re getting too big, startups – even the largest and most successful ones – are about to get squashed by incumbents. Spotify vs. Apple Music, Snapchat vs. Facebook, Amazon vs. The World, etc. feel a lot like WordPerfect vs. Microsoft Word in 1990 or Lotus vs. Excel in ‘91.

About-to-go-public Spotify is growing at a fantastic rate, but SNAP continues to struggle in the face of Facebook’s appetite for all our social interactions. Snapchat’s 166 million daily active users didn’t prevent Facebook from amassing over 250 million users just a year after its launch.

Amazon Basics is all about leveraging proprietary supply and demand data to outcompete the very sellers using Amazon’s platform, not to mention the cutthroat fight it’s picked with Netflix.

The list, of course, goes on.

Those who own the platform ultimately win. Like PC software companies 20 years ago, it’s tough being in the web business today. We can’t afford to compete with GAFA. They’ve already won this game. Let’s play a different one.

Original: https://gateway.ipfs.io/ipfs/QmfUSPGUZ5LNd1bM5RpT4cT9aUP3SGSCyN1FWi4ootjTbe/

![[placeholder] short deck (dragged).png](https://images.squarespace-cdn.com/content/v1/5a717b03f43b55ecc784849f/1519129908214-UND1T6MIR57RNJE9LBYT/%5Bplaceholder%5D+short+deck+%28dragged%29.png)